July 7, 2015 / Arlin Stoltzfus / 9 Comments

The return of mutationism to mainstream evolutionary biology is evident in the way mainstream articles now describe the role of mutation in evolution, in our reliance on mathematical models that evoke a mutationist view, and in evo-devo research programs that focus on identifying causative major-effect mutations.

This shift has happened in a kind of sub-conscious way, without commentary or reflection. I’ll comment below on the reasons for that.

My main purpose here is to contrast the ways in which the neo-Darwinian and mutationist views refer implicitly to two different regimes of population genetics, evoked by two styles of self-service restaurant: the buffet and the sushi conveyor.

(more…)

May 5, 2015 / Arlin Stoltzfus / 11 Comments

Over at Sandwalk, Larry Moran posted some interesting bits from his molecular evolution class exam, including a passage from Mike Lynch arguing for his claim that “nothing in evolution makes sense except in the light of population genetics”. In this passage, which I’ll quote below, Lynch says that evolution is governed by 4 fundamental forces.

The idea that evolution is governed by population-genetic “forces” is common but fundamentally mistaken. I wish we could just put this to rest.

(more…)

April 17, 2015 / Arlin Stoltzfus / 0 Comments

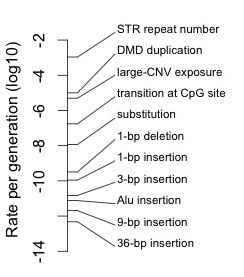

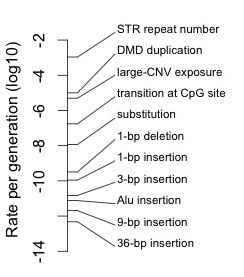

To understand the potential role of mutation in evolution, it is important to understand the enormous range of rates for different types of mutations. If one ignores this, and thinks of “the mutation rate” as a single number, or if one divides mutation into point mutations with a characteristic rate, and other mutations that are ignored, one is going to miss out on how rates of mutation determine what kinds of changes are more or less common in evolution. It would be like allowing a concept of fitness, then subverting its utility by distinguishing only two values, viable and inviable. [1]

(more…)

April 16, 2015 / Arlin Stoltzfus / 7 Comments

Last year Sahotra Sarkar published a paper that got me thinking. His piece entitled “The Genomic Challenge to Adaptationism” focused on the writings of Lynch & Koonin, arguing that molecular studies continue to present a major challenge to the received view of evolution, by suggesting that “non-adaptive processes dominate genome architecture evolution”.

The idea that molecular studies are bringing about a gradual but profound shift in how we understand evolution is something I’ve considered for a long time. It reminds me of the urban myth about boiling a frog, to the effect that the frog will not notice the change if you bring it on slowly enough. Molecular results on evolution have been emerging slowly and steadily since the late 1950s. Initially these results were shunted into a separate stream of “molecular evolution” (with its own journals and conferences), but over time, they have been merged into the mainstream, leading to the impression that molecular results can’t possibly have any revolutionary implications (read more in a recent article here).

Frog on a saucepan (credit: James Lee; source: http://en.wikipedia.org/wiki/Boiling_frog)

(more…)

April 14, 2015 / Arlin Stoltzfus / 8 Comments

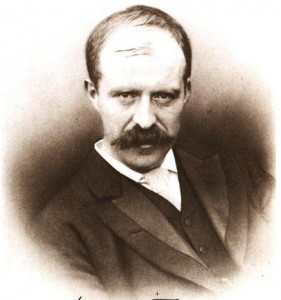

Debates on “gradualism” in evolutionary biology address the size distribution of evolutionary changes. The classical Darwinian position, better described as “infinitesimalism”, holds that evolutionary change is smooth in the sense of being composed of an abundance of infinitesimals (not one infinitesimal at a time, but a blending flow of infinitesimals). The alternative is that evolution sometimes involves “saltations” or jumps, i.e., distinctive and discrete steps. The dispute between these two positions has been a subject of acrimony at various times in the 20th century, with several minor skirmishes, and a larger battle with at least one genuine casualty (image).

Walter Frank Rafael Weldon (public domain image from wikipedia). Weldon ignored an advancing illness and worked himself to death (1906) poring over breeding records in an attempt to cast doubt on discrete inheritance. Along with Pearson and other “biometricians”, Weldon held to Darwin’s non-Mendelian view combining gradual hereditary fluctuations with blending inheritance.

Today, over a decade into the 21st century, we have abundant evidence for saltations, yet the term is virtually unknown, and we still seem to invoke selection under the assumption of gradualism. Are we saltationists, or not? I’m going to offer 3 answers below.

But first, we need to review why the issue is important for evolutionary theory.

(more…)

March 2, 2015 / Arlin Stoltzfus / 1 Comment

In a previous post called “The revolt of the clay”, I described four different ways to think about the role of variation as a process with a predictably non-random impact on the outcome of evolution. The main point was to draw attention to my favorite idea, about biases in the introduction of variation as a source of orientation or direction, and to provide a list of what (IMHO) represents the best evidence for this idea. I gave anecdotes from four categories of evidence:

- mutation-biased laboratory adaptation

- mutation-biased parallel adaptation in nature

- recurrent evolution of genomic features

- miscellaneous evo-devo cases such as worm sperm

With the publication of a study by Couce, et al., 2015 (a team of researchers from Spain, France and Germany), the first category just got stronger. Couce, et al. did an experiment that I’ve been trying to talk experimentalists into doing for a long time: they directly compared the spectrum of lab-evolved changes between two strains with a known difference in mutation spectrum.

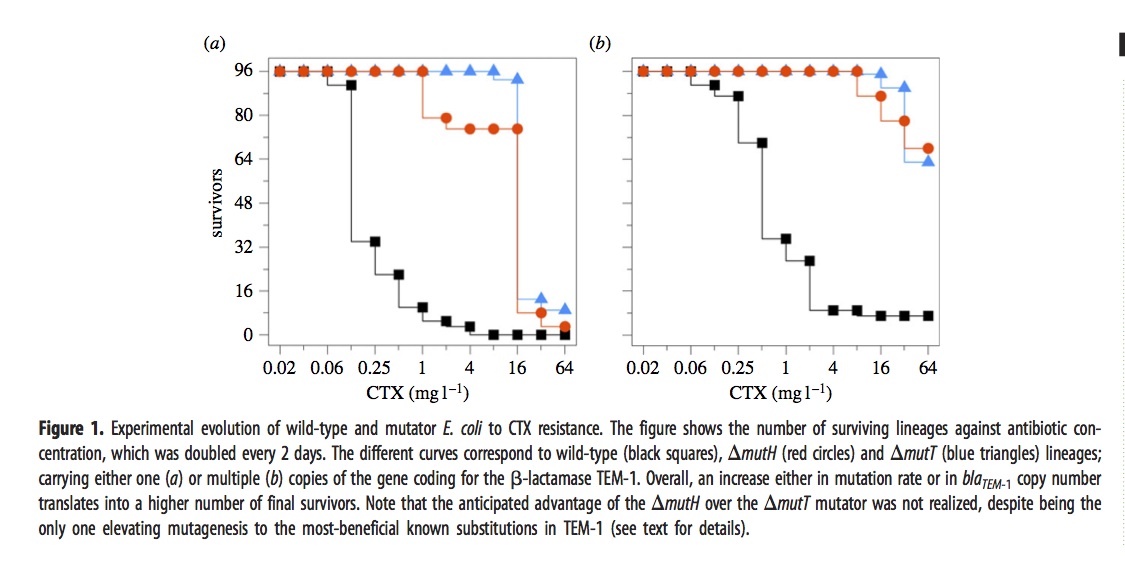

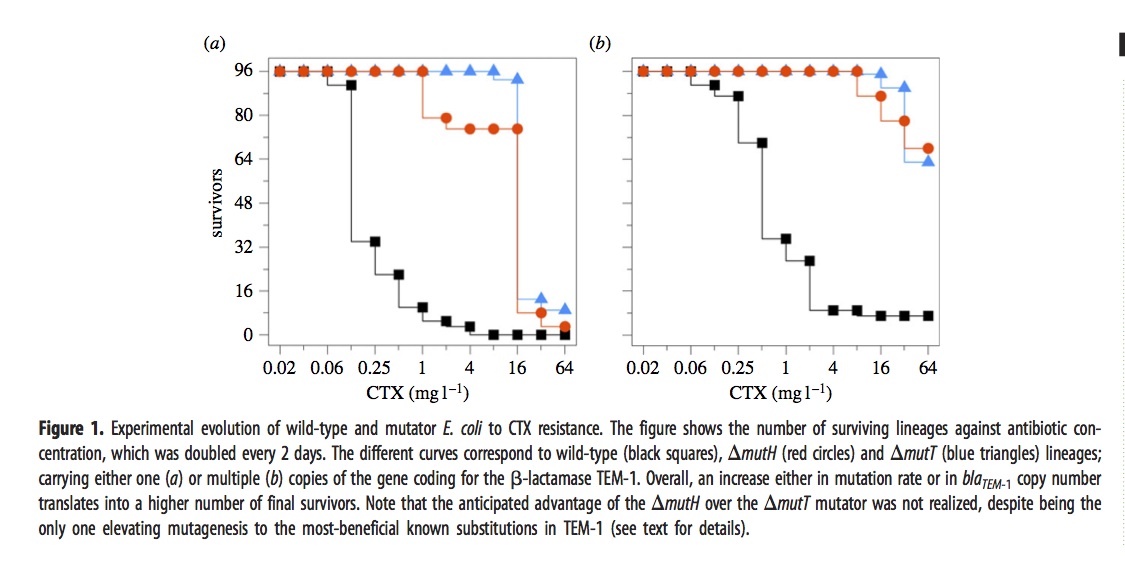

In brief, they exposed 576 different lines of E. coli to a dose of cefotaxime that started well below the MIC and doubled every 2 days for 12 iterations. The evolved lines fell into 6 groups depending on 3 choices of genetic background— wild-type, mutH, or mutT— and 2 choices of target gene copy number— just 1 copy of the TEM-1 gene, or an extra ~18 plasmid copies.
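The design just described can be tallied in a few lines. This is only a bookkeeping sketch: the string labels are illustrative, and the fold-increase figure assumes that each of the 12 iterations is one doubling of the starting dose, which the summary above does not state explicitly.

```python
from itertools import product

# Factor levels as described in the text; the string labels are illustrative.
backgrounds = ["wild-type", "mutH", "mutT"]
copy_numbers = ["single-copy TEM-1", "multi-copy TEM-1"]
lines_per_condition = 96

conditions = list(product(backgrounds, copy_numbers))
total_lines = len(conditions) * lines_per_condition

# Assumption: 12 iterations means 12 successive doublings of the starting dose.
doublings = 12
fold_increase = 2 ** doublings

print(len(conditions), "conditions,", total_lines, "lines in total")
print("final dose relative to start:", fold_increase)
```

If the first iteration is instead the starting dose itself, the final dose would be one doubling lower.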

The ultimate dose of antibiotic was so extreme that most of the lines went extinct. The figure shows how many of the 96 lines (for each condition) remain viable at a given concentration.

The mutators were chosen because they have different spectra. The “red” mutator (mutH) greatly elevates the rate of G –> A transitions, and also elevates A –> G transitions. The “blue” mutator (mutT) elevates A –> C transversions. The figure above indicates that the blue mutator fared slightly better. A much stronger effect is that the lines with multiple copies of the TEM-1 gene did better. Very few single-copy strains survived the highest doses of antibiotic.

The authors then did some phenotypic characterization that led them to suspect that it was not just the TEM-1 gene, but other genes that were important in adaptation. In particular, they suspected the gene for PBP3, which is a direct target of cefotaxime.

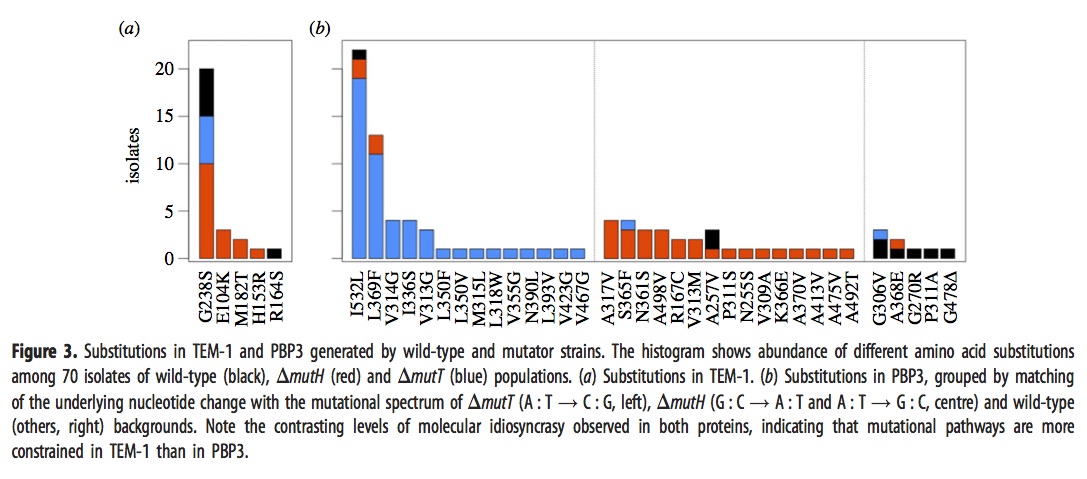

They sequenced the genes for TEM-1 and PBP3 from a large sample of the surviving strains above, with results shown in this figure:

The left panel, i.e., figure 3a, shows that evolved strains of mutH (red), mutT (blue), and wild-type (black) sometimes have one of the well-known mutants in the TEM-1 gene encoding beta-lactamase. The predominance of red means that more mutH strains evolved the familiar TEM-1 mutations, which makes sense because these mutations happen to be the G–>A and A–>G transitions favored by mutH.

Panel 3b, which refers to mutants in the gene encoding PBP3, is a bit complicated. As before, the histogram bars are colored based on the background in which the particular mutation listed on the x axis is fixed. However, rather than showing all the lines together in one histogram, they are arranged into 3 different histograms depending on whether the mutational pathway matches the pathways preferred by mutT (left sub-panel), by mutH (center sub-panel), or neither (right sub-panel). For instance, blue appears in 16 columns, so there are 16 different mutant PBP3 types that evolved in mutT backgrounds, and 14 of them are in the left sub-panel indicating mutT-favored mutational pathways. Red appears in 18 columns, 15 corresponding to mutH-favored pathways.

Importantly, the density in the columns doesn’t overlap much: nearly all of the blueness is in the left histogram (~50/52), and nearly all the redness is in the center histogram (~26/31). This means that evolved mutT strains overwhelmingly carried mutations favored by the mutT mutators, and likewise for mutH strains and mutH mutators. If adaptation were unaffected by tendencies of mutation, as the architects of the Modern Synthesis believed, then the colors would be randomly scrambled between the 3 histograms. Because they are not, we know that the regime of mutation imposes a predictable bias on the spectrum of adaptive changes.

The main weakness of the paper, perhaps, is that they did not do reconstructions to show causative mutations. Instead they make plausibility arguments based on having seen some of these in other studies of laboratory or clinical resistance. I think the paper is pretty strong in spite of this. Recurrence of a mutation in independent lines is a very strong sign that it is causative, whereas singletons might be hitch-hikers with no causative significance. Yet if we were to eliminate the 17 non-recurring mutants, we would still have a striking contrast. Of ~43 mutT mutants with recurrently fixed mutations, ~41 are for mutT-favored pathways; of the ~23 mutH mutants with recurrently fixed mutations, ~18 are for mutH-favored pathways.
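For readers who want the proportions behind these contrasts, the approximate counts quoted above can be turned into fractions. The counts are the rough values read off the figure (hence the tildes), so the output is indicative only, and the labels are illustrative.

```python
# Approximate counts quoted in the text:
# (mutants matching their own mutator's favored pathways, total mutants).
counts = {
    "mutT, all mutants": (50, 52),
    "mutH, all mutants": (26, 31),
    "mutT, recurrent only": (41, 43),
    "mutH, recurrent only": (18, 23),
}

def fraction_matching(matching, total):
    """Fraction of fixed mutations that follow the mutator's own favored pathways."""
    return matching / total

for label, (matching, total) in counts.items():
    print(f"{label}: {matching}/{total} = {fraction_matching(matching, total):.2f}")
```

Dropping the singletons lowers both fractions a little, but each background still mostly fixes mutations along its own favored pathways.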

Unfortunately, due to the language and framing of this paper, casual readers might not recognize that it is actually about mutation-biased adaptation. The term “mutation bias” only occurs once, in the claim that “Mutational biases forcibly impose a high level of divergence at the molecular level.” I’m not sure what that means. What I would describe as “evolution biased by mutation” is described by Couce, et al as “limits to natural selection imposed by genetic constraints”. Sometimes the language of my fellow evolutionists seems like a strange and foreign language to me. But I digress.

To summarize, this work clearly establishes that 2 different mutator strains adapting to increasing antibiotic concentrations exhibit strikingly different spectra of evolved changes that match their mutation spectra, consistent with the a priori expectation of mutation-biased adaptation.

January 29, 2015 / Arlin Stoltzfus / 2 Comments

A “chance” encounter

Earlier this month I was contacted by a reporter writing a piece on the role of chance in evolution. I responded that I didn’t work on that topic, but if he was interested in predictable non-randomness due to biases in variation, then I would be happy to talk. We had a nice chat last Friday.

I’m only working on the role of “chance” in the sense that, in our field, referring to “chance” is a placemarker for the demise of an approach based implicitly on deterministic thinking— evolution proceeds to equilibrium, and everything turns out for the best, driven by selection. This justifies the classic view that “the ultimate source of explanation in biology is the principle of natural selection” (Ayala, 1970). Bruce Levin and colleagues mock this idea hilariously in the following passage from an actual research paper:

To be sure, the ascent and fixation of the earlier-occurring rather than the best-adapted genotypes due to this bottleneck-mutation rate mechanism is a non-equilibrium result. On Equilibrium Day deterministic processes will prevail and the best genotypes will inherit the earth (Levin, Perrot & Walker, 2000)

Wait, I’m still laughing. (more…)

November 24, 2014 / Arlin Stoltzfus / 1 Comment

Conceptual frameworks guide our thinking

Our efforts to understand the world depend on conceptual frameworks and are guided by metaphors. We have lots of them. I suspect that most are applied without awareness. If I am approaching a messy problem for the first time, I might begin with the idea that there are various “factors” that contribute to a population of “outcomes”. I would set about listing the factors and thinking about how to measure them and quantify their impact. This would depend, of course, on how I defined the outcome and the factors.

Let’s take a messy problem, like the US congress. How would we set about understanding this? I often hear it said that congress is “broken”. That has clear implications. It suggests there was a time when congress was not broken, that there is some definable state of unbrokenness, and that we can return to it by “fixing” congress. By contrast, if we said that congress is a cancer on the union, this would suggest that the remedy is to get rid of congress, not to fix it.

I also often hear that the problem is “gridlock”, invoking the metaphor of stalled traffic. The implication here is that there should be some productive flow of operations and that it has been halted. This metaphor is a bit more interesting, because it suggests that we might have to untangle things in order to restore flow, and then congress would pass more laws. By contrast, this kind of suggestion is sometimes met with the response that the less congress does, the better off we are. If this is our idea of “effectiveness”, then our analysis is going to be different.

Conceptualizations of the role of variation

How do evolutionary biologists look at the problem of variation? How do their metaphors or conceptual frameworks influence the kinds of questions that are being asked, and the kinds of answers that seem appropriate?

Here I’d like to examine— briefly but critically— some of the ways that the problem of variation is framed.

Bauxite, the main source of aluminum, is an unrefined (raw) ore that often contains iron oxides and clay (image from wikipedia)

Raw materials

The most common way of referring to variation is as “raw materials.” What does it mean to be a raw material? Picture in your mind some raw materials like a pile of wood pulp, a mound of sand, a field scattered with aluminum ore (image), a train car full of coal, and so on, and you begin to realize that this is a very evocative metaphor. Raw materials are used in abundance and are “raw” in the sense of being unprocessed or unrefined. Wool is a raw material: wool processed and spun into cloth is a material, but not a raw material.

What is the role of raw materials? Dobzhansky said that variation was like the raw material going into a factory. What is the relation of raw materials to factory products? Raw materials provide substance or mass, not form or direction. Given a description of raw materials, we can’t really guess the factory product (image: mystery raw materials). Raw materials are a “material” cause in the Aristotelean sense, providing substance and not form. This is essential to the Darwinian view of variation: selection is an agent, like the potter that shapes the clay, while variation is a passive source of materials, like the clay.

These are the raw materials for what manufactured product? See note 1 for answers and credits

What kinds of questions does this conceptual framework suggest? What kinds of answers? If we think of variation as raw materials, we might ask questions about how much we have, or how much we need. Raw materials are used in bulk, so our main questions will be about how much we have.

This reminds us of the framework of quantitative genetics. In the classical idealization, variation has a mean of zero and a non-zero variance: variation has an amount, but not a direction. Nevertheless, the multivariate generalization of quantitative genetics (Lande & Arnold) breaks the metaphor— in the multivariate case, selection and the G matrix jointly determine the multivariate direction of evolution.
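The multivariate result can be made concrete with the standard form of the Lande equation, Δz̄ = Gβ, where β is the selection gradient. A minimal sketch follows, with made-up numbers for G and β (they are not estimates from any study): when the two traits covary, the predicted response is deflected away from the direction that selection favors.

```python
def response_to_selection(G, beta):
    """Multivariate breeder's equation: delta_z = G * beta (matrix-vector product)."""
    return [sum(g_ij * b_j for g_ij, b_j in zip(row, beta)) for row in G]

# Hypothetical values for illustration only.
beta = [1.0, 0.0]              # selection favors an increase in trait 1 only
G_uncorrelated = [[0.5, 0.0],
                  [0.0, 0.5]]
G_correlated = [[0.5, 0.4],
                [0.4, 0.5]]

print(response_to_selection(G_uncorrelated, beta))  # response in trait 1 only
print(response_to_selection(G_correlated, beta))    # trait 2 is dragged along
```

In the second case the direction of change depends on the structure of variation as well as on selection, which is exactly where the "raw materials" picture stops fitting.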

Chance

The next most-common conceptual framing for talking about the role of variation is “chance”. What do we mean by chance? I have looked into this issue and it’s a huge mess.

BTW, I have experienced several scientists pounding their chests and insisting that “chance” in science has a clear meaning that applies to variation, and that we all know what it is. Nonsense. The only concept related to chance and randomness that has a clear meaning is the concept of “stochastic”, from mathematics, and it is purely definitional. A stochastic variable is a variable that may take on certain values. For instance, we can represent the outcome of rolling a single die as a stochastic variable that takes on the values 1 to 6. That is perfectly clear.
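In code, this purely definitional sense of “stochastic” amounts to a set of values with probabilities attached. The sketch below assumes a fair die (the text above specifies only the values 1 to 6); note that nothing in it says anything about causes or independence.

```python
from fractions import Fraction

# A stochastic variable, defined abstractly: possible values and their probabilities.
# Assumption: the die is fair, so each face has probability 1/6.
die = {face: Fraction(1, 6) for face in range(1, 7)}

total_probability = sum(die.values())
expected_value = sum(face * p for face, p in die.items())

print(sorted(die))          # the values the variable may take
print(total_probability)    # the probabilities sum to 1
print(expected_value)       # mean of the distribution
```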

However, is the rolling of dice a matter of “chance”? Are the outcomes “random”? These are two different questions, and they are ontological (whereas “stochastic” is abstract, merely a definition). Often “chance” can be related to Aristotle’s conception of chance as the confluence of independent causal streams. To say that variation is a matter of “chance” is to say that it occurs independently of other stuff that we think is more important. Among mathematicians, randomness is a concept about patterns, not causes or independence. To say that variation is random is to say that it has no discernible pattern.

What kinds of questions or explanations are prompted by this framework? One might say that it does not provide us with much guidance for doing research. I would argue that it provides a very strong negative guidance: don’t study variation, because it is just a matter of chance.

But the same doctrine has a very obvious application when we are constructing retrospective explanations. If evolution took a particular path dependent on some mutations happening, then the path is a matter of “chance” because the mutations are a matter of chance. We would say that evolution depends on “chance.” This kind of empty statement is made routinely by way of interpreting Lenski’s experiments, for instance.

This framework also inspires skeptical questions, prompting folks to ask whether variation is really “chance”. This skepticism has been constant since Darwin’s time. But the claims of skeptics are relatively uninformative. Saying that variation is not a matter of chance tells us very little about the nature of variation or its role in evolution.

Constraints

According to the stereotype, at least, academics value freedom. Who would have guessed that they would so willingly embrace the concept of “constraints”?

In this view, the role of variation is like the role of handcuffs, preventing someone from doing something they might otherwise do. Variation constrains evolution. Or sometimes, variation is said to constrain selection.

What kinds of hypotheses, research projects, or explanations does this framework of “constraints” suggest?

To show that a constraint exists, we would need to find a counter-example where it doesn’t. So the constraints metaphor encourages us to look for changes that occur in one taxonomic context, but not another. Once we find zero changes of a particular type in taxon A, and x changes in taxon B, we have to set about showing that the difference between zero and x is not simply sampling error, and that the cause of the difference is a lack of variation.

The Pat Tillman memorial bridge in a state of partial completion. How do we know it is incomplete? Originally posted to Flickr by David Jones http://flickr.com/photos/45457437@N00/4430518713.

For this reason, the image of handcuffs is perhaps misleading. A better image would be a pie that is missing a slice, or perhaps an unfinished bridge (image). The difference matters for 2 reasons. First, handcuffs actually exist, and they prevent movement because they are made of solid metal. By contrast, a “constraint” on variation is a lack of variation, a non-existent thing. A constraint is not a cause: it is literally made of nothing and it is invoked to account for a non-event. Second, how can nature be found lacking? What does it mean to say that something is missing? We are comfortable saying that a pie is missing a slice, because we are safe in assuming that the pie was made whole, and someone took a slice. We say that the bridge is “missing a piece” because we know the intention is to convey vehicles from one side to another, which won’t be possible until the road-bed connects across the span. We are comparing what we observe to some normative state in which the pie or the bridge is complete.

So what does it mean when we invoke “constraints” in a natural case? Isn’t nature complete and whole already? What is the normative state in which there are no “constraints”? Apparently, when people invoke “constraints”, they have some ideal of infinite or abundant variation in the back of their minds.

We can do better than this

How do we think about the role of variation in evolution? Above I reviewed some of the conceptual frameworks and metaphors that have guided thinking about the role of variation.

The architects of the Modern Synthesis argued literally that selection is like a creative agent— a writer, sculptor, composer, painter— that composes finished products out of the raw materials of words, clay, notes, pigment, etc. They promoted a doctrine of “random mutation” that seemed to suggest mutation would turn out to be unimportant for anything of interest to us as biologists.

The “raw materials” metaphor is still quite dominant. I see it frequently. I would guess that it is invoked in thousands of publications every year. I can’t recall seeing anyone question it, though I would argue that many of the publications that cite the “raw materials” doctrine are making claims that are inconsistent with what “raw materials” actually means.

A minor reaction to the Modern Synthesis position has been to argue that variation is not random. As noted above, simply saying that mutation is non-random doesn’t get us very far, so advocates of this view (e.g., Shapiro) are trying to suggest other ways to think about the role of non-random variation.

For a time, the idea that “constraints” are important was a major theme of evo-devo. One doesn’t hear it as much anymore. I think the concept may have outlived its usefulness.

Notes

1. According to Wikipedia, these are raw materials for making perfume, including (from top down, left to right) Makko powder (抹香; Machilus thunbergii), Borneol camphor (Dryobalanops aromatica), Sumatra Benzoin (Styrax benzoin), Omani Frankincense (Boswellia sacra), Guggul (Commiphora wightii), Golden Frankincense (Boswellia papyrifera), Tolu balsam (Myroxylon balsamum), Somalian Myrrh (Commiphora myrrha), Labdanum (Cistus villosus), Opoponax (Commiphora opoponax), and white Indian Sandalwood powder (Santalum album). Image from user Sjschen, wikimedia commons, CC-BY-SA-2.5,2.0,1.0; GFDL-WITH-DISCLAIMERS.

November 4, 2014 / Arlin Stoltzfus / 1 Comment

John Tyler Bonner’s Randomness in Evolution (2013; Princeton University Press) is a small and lightweight book— 123 pages, plus a bibliography with a mere 43 references. So, I won’t feel too bad for giving it a rather small and lightweight review, based on a superficial reading. Last year, I was tasked with reviewing Nei’s book and I went way overboard reading and re-reading it, trying to decipher the missing theory that Nei claims to be proving. I even wrote to Nei with questions. He literally instructed me to read the book without pre-conceptions. (Pro tip: when a reader asks you to explain your work, do it— don’t pass the buck).

Bonner’s book is somewhat similar to Nei’s in that it is full of broad generalizations and narrow examples, without enough of the conceptual infrastructure in between; both books offer provocative ideas that are something less than a new theory. The difference is that Bonner is aware of this. His modest claim is simply that certain forms of randomness (which he describes) play an under-appreciated role in evolution.

My first reaction, skimming parts of the book, was annoyance with Bonner’s repeated claims that randomness is essential to Darwin’s theory. This is not correct historically or logically. Darwin’s theory does not depend on variation having any of the various meanings that we normally assign to the term “random” in other contexts (uniformity, independence, spontaneity, indeterminacy, unpredictability), only on it being small and multifarious. Imagine a deterministic mechanism of variation that creates a quasi-continuous range of trait values above and below the initial values, and you can get evolution exactly as Darwin conceived it. Darwin believed that the variation used in evolution was stimulated by exposure to “altered conditions of life”. In this theory of variation-on-demand, adaptation happened automatically. The “random mutation” doctrine came along later, and it meant something (rejection of Lamarckian variation) that Darwin himself clearly rejected.

As I read more of the book, I realized that Bonner’s “randomness” covers several different ideas, one of which arguably justifies his references to Darwin.

In some cases, Bonner’s “randomness” means that different instances of a dynamic system inevitably disperse over a non-zero area of state-space, due to heterogeneity in factors we don’t care about (the technical language is mine: Bonner doesn’t describe it this way). Imagine a local population of genetically identical slime mold cells: expose them to microheterogeneity in the availability of bacterial food sources, and you’ll get heterogeneity in the sizes of cells. Even if you give those cells identical food, after some period of time they will be out of synchrony, and we’ll get a distribution that goes from skinny cells that just divided to fat ones that are about to divide.

I’ll call this flavor of randomness “predictable dispersion” or “reliable dispersion”, noting the relationship to arguments of McShea and Brandon. Bonner argues that reliable stochastic dispersion in morphology and other gross features plays an important role in life cycles, e.g., when certain slime molds form a fruiting body, the skinny cells go on to become stalk cells, and the fat ones become spore cells. This is an interesting argument, but is not developed in full detail. This particular kind of “randomness” is suggestive of Darwin’s theory, though it isn’t what Darwin meant by “chance”.

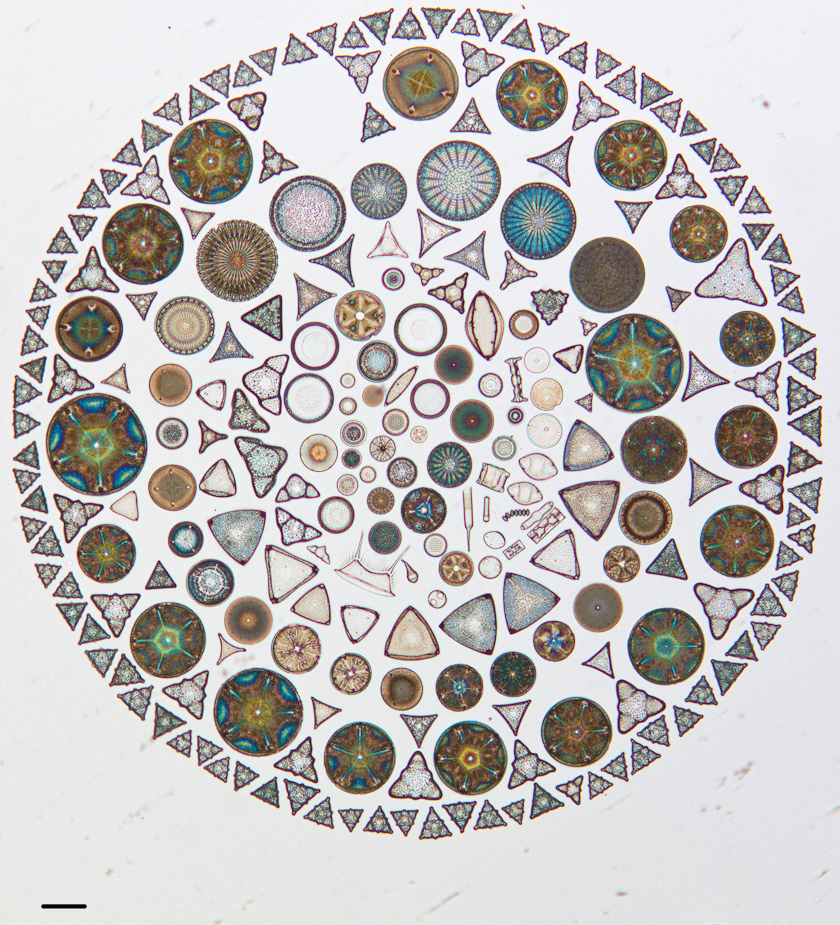

Elsewhere, Bonner is simply invoking neutral evolution, e.g., neutral evolution of morphology. In considering a group of marine planktonic organisms such as the foraminifera or radiolaria or diatoms, with literally thousands of morphologically distinct species (note the radiolarians on the cover, from Haeckel’s drawings), he finds it unfathomable that all of this morphological diversity is adaptive. He argues quite reasonably that there is much more habitat heterogeneity in terrestrial than planktonic environments, yet these are largely planktonic marine organisms, often cosmopolitan, which argues against local niches. This is about as far as the argument goes.

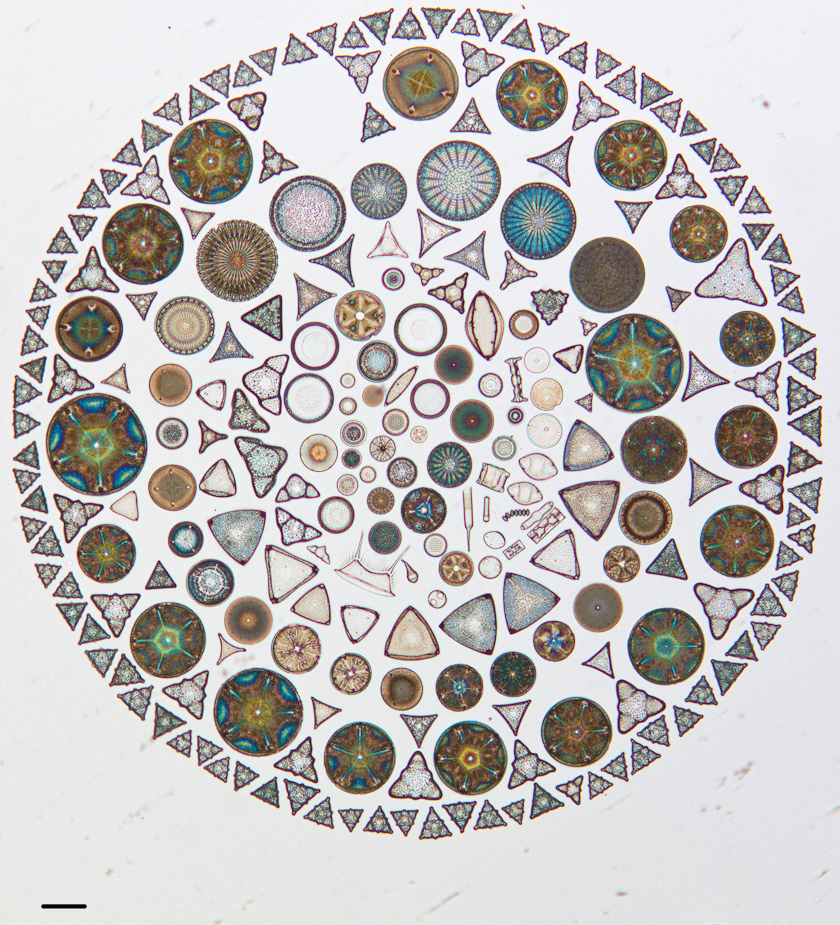

Diatoms artfully arranged on a microscope slide (scale bar, 100 microns; Cal Acad Sci collection, https://www.flickr.com/photos/casgeology/sets/72157633997313366)

It seems to me that a cosmopolitan distribution argues against this thesis. If these organisms are not segregating a niche, competitive exclusion would come into play and reduce diversity. At the risk of sounding like an adaptationist Pollyanna, I would wager that there is a constant differential sorting of planktonic organisms (due to subtle differences in temperature, current, viscosity) in such a way as to preserve a diversity of morphologies, even when the system seems well-mixed on a larger scale. Every time a squid swims by, I reckon, the patterns of turbulence sort planktonic critters in reliable ways that bring different resources to differently shaped species. Even if such an adaptive hypothesis is true, Bonner’s argument remains relevant in the sense that (in my scenario) selection is blind to every aspect of morphology that looks interesting to us visually (and only cares about the effect on mechanical sorting).

Tip vortex of a small aircraft (wikipedia: turbulence)

Bonner also makes an argument about size and randomness that was not very convincing. However, he makes it clear that he is not expecting us to be convinced by such arguments. He wants only to inspire further thought. I suppose he has succeeded, in my case, but I wish he had gone further to propose more specific hypotheses, and to outline a research program.

What thoughts has Randomness in Evolution inspired? My main thought is that we need to stop using “chance” and “randomness” so casually, and start making meaningful distinctions. Like Bonner— who frequently juxtaposes different ideas under the theme of “randomness”, and jumps jarringly from Darwin to Wright to Kimura to Lynch— we often use these terms to cover a wide array of concepts, and it isn’t helpful.

And please do not refer to drift as “Wright’s idea”. The idea of neutral changes is generic and had been kicking around much earlier. Early geneticists such as Morgan proposed the more specific idea of random changes in gene frequencies, and also the (slightly different) idea of random fixation of neutral mutations. If we are looking for early advocates of the importance of drift, then according to what I’ve read (e.g., Dobzhansky’s account), we should honor a couple of Dutch geneticists, Arendt and Anna Hagedoorn, based on their 1921 book. Wright merely introduced the distinction “steady drift” = selection and “random drift” = drift, which degraded into simply “drift” to mean random drift.

The role that drift plays in Wright’s signature “shifting balance” theory, which came along later, is distinctive. Wright did not introduce “randomness” in order to explain dispersion or unpredictability in the outcome of evolution. To the contrary, his idea was to leverage drift in a scheme for improving search efficiency. The role of drift in Wright’s theory is analogous to the role of heat in simulated annealing. This and similar meta-heuristics used in optimization methods allow the system to explore solutions worse than the current solution, a property that reduces the chance of getting stuck at a local optimum, and (when properly tuned) ultimately increases optimization. Wright assumed that evolution was a very good problem-solving engine, and that it must have some special features that prevent it from getting stuck at local optima. He proposed that the special optimization power of evolution comes from dividing a large population into partially isolated demes, each subject to stochastic changes in allele frequencies. The intention of this scheme was to make evolution more predictable, more adaptive and more reproducible.
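The analogy can be illustrated with a toy search problem. The sketch below is a generic simulated-annealing routine on a made-up one-dimensional fitness landscape; it is not a model of the shifting balance process itself, and the landscape, cooling schedule, and parameter values are all assumptions chosen for illustration. A greedy climber that accepts only improvements stays on the local peak where it starts, whereas the annealer can accept downhill steps while the “temperature” is high, which gives it a chance to cross the valley.

```python
import math
import random

# Hypothetical landscape: local peak at index 2, global peak at index 7.
FITNESS = [1, 3, 5, 3, 2, 4, 6, 9, 6, 2]

def neighbors(i):
    """Adjacent positions on the one-dimensional landscape."""
    return [j for j in (i - 1, i + 1) if 0 <= j < len(FITNESS)]

def greedy_climb(start):
    """Move only to strictly fitter neighbors; stops at the first local peak."""
    current = start
    while True:
        better = [j for j in neighbors(current) if FITNESS[j] > FITNESS[current]]
        if not better:
            return current
        current = max(better, key=lambda j: FITNESS[j])

def anneal(start, steps=2000, t_start=3.0, t_end=0.05, seed=0):
    """Accept worse neighbors with probability exp(delta / T), cooling T over time."""
    rng = random.Random(seed)
    current = best = start
    for step in range(steps):
        # Geometric cooling from t_start down to t_end.
        temperature = t_start * (t_end / t_start) ** (step / (steps - 1))
        candidate = rng.choice(neighbors(current))
        delta = FITNESS[candidate] - FITNESS[current]
        if delta >= 0 or rng.random() < math.exp(delta / temperature):
            current = candidate
        if FITNESS[current] > FITNESS[best]:
            best = current
    return best

print("greedy ends at index", greedy_climb(2))
print("annealing best index", anneal(2))
```

The point of the noise here is the same as in Wright's scheme: it is a device for escaping local optima, not a source of unpredictability in the final result.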

Kimura was doing something entirely different, trying to solve a technical problem in the application of population genetics theory, which is that the rate of molecular evolution is high and constant, and seems incompatible with the projected population-genetic cost of selective allele replacements. The solution was to propose that most changes take place at low cost because they are due to the random fixation of neutral alleles. Kimura was deeply committed to his theory and defended it to his death, but I don’t think the random character of fixation by drift had any particular importance for him.

These ideas are different, again, from Lynch’s thesis. The explanatory target of Lynch’s thesis is not the unpredictability of evolution, or an excess of unpatterned diversity. Instead, Lynch purports to have discovered a pattern, and a drift-based explanation for that pattern, that takes much of the mystery out of genome size evolution. Where previously we saw anomalous differences in genome size, we now (according to Lynch) see a widespread inverse correlation between genome size and population size, and we have a hypothesis to explain that correlation based on drift (in combination with a tendency to gain mobile elements). In context, drift is responsible for a directional or asymmetric effect, because it is stronger in smaller populations.

My last comment, not stimulated by Bonner’s book, is that we really should reconsider what we mean by “randomness”, which I think is dispensable. I do not say this because I have a secret belief that everything happens for a reason. The problem with randomness is that everyone invokes it as if, by calling a process “random”, we are diagnosing some observable property of that process.

I think it is hardly ever the case that “randomness” properly belongs to the thing alleged to be random. Where “randomness” connotes chance or independence, it is always a matter of one thing relative to another. Calling something random is like calling something “independent”— it immediately prompts the question “independent of what?”.

In other cases, I think “random” is used as a heuristic, a kind of epistemological (methodological) perspective on factors. A random process or factor is one that we do not care about— something we have placed in the category “unimportant”. The process may rely on causes that are perfectly deterministic, and its behavior may be predictable and highly non-uniform, but if we don’t care about it, we assign it a stochastic variable and call it “random.” Is movement random? That depends. If we are tracking wolves with radio collars, we care about the day-to-day movements of individual organisms. The movements aren’t “random”. If we are modeling the formation of a slime-mold fruiting body, we no longer care about the day-to-day movements of individual organisms. They are random. In reality, they are no less deterministic or predictable than the movements of wolves, but we don’t care about them.

Once you start caring about a factor, it is no longer random. Once a factor is in the “important to me” category, we see that there are source laws that determine its behavior, and consequence laws that determine the effects of this behavior. Once I got interested in the role of mutation in evolution, I wanted to understand the cause of biases in mutation, and the consequences of these biases on the course of evolution. The doctrine that “mutation is random” meant only that some people still put mutation in the “unimportant to me” category, and these people typically are confused about the source laws and consequence laws of mutation.

October 23, 2014 / Arlin Stoltzfus / 3 Comments

In a recent QRB paper with David McCandlish, we review the form, origins, uses, and implications of models (e.g., the familiar K = 4Nus) that represent evolutionary change as a 2-step process of (1) the introduction of a new allele by mutation, followed by (2) its fixation or loss.
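To see where a formula like K = 4Nus comes from, one can write the rate as the product of the two steps: the rate at which new mutations originate (2Nu in a diploid population) times the probability that each one fixes. The sketch below uses Kimura's diffusion approximation for the fixation probability of a new mutation with additive effects, assuming that effective and census population sizes are equal; the parameter values are arbitrary. For a beneficial allele with small s and large Ns, the fixation probability is close to 2s, giving K ≈ 4Nus, and for s = 0 it is 1/(2N), giving K = u.

```python
import math

def fixation_probability(s, N):
    """Kimura's approximation for a new mutation in a diploid population (Ne = N assumed)."""
    if s == 0:
        return 1.0 / (2 * N)          # neutral limit
    return (1 - math.exp(-2 * s)) / (1 - math.exp(-4 * N * s))

def origin_fixation_rate(N, u, s):
    """Rate of change = (rate of origin, 2Nu) x (probability of fixation)."""
    return 2 * N * u * fixation_probability(s, N)

# Arbitrary illustrative values.
N, u, s = 10_000, 1e-8, 0.01

print("origin-fixation rate:", origin_fixation_rate(N, u, s))
print("4Nus approximation:  ", 4 * N * u * s)
print("neutral rate (= u):  ", origin_fixation_rate(N, u, 0))
```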

What could be surprising about these “origin-fixation” models, which are invoked in theoretical models of adaptation (e.g., the mutational landscape model) and in widely used methods applied to phylogenetic inference, comparative genomics, detecting selection, modeling codon usage, and so on?

Quite a lot, it turns out. (more…)